During a workshop, we developed some rather broad questions about the concepts of information and complexity in the sciences, with a particular focus on quantum physics, digital philosophy and philosophy of science. They are spiced up with some more metaphysical questions and some rants by well-known scientists, to provoke the reader’s imagination. References to the literature are given as a starting point for developing answers to the questions.

Comments, answers and even more questions are very welcome.

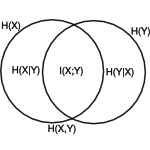

Questions part I - Information and Entropy [CT91]

- Is it possible to define a unifying universal notion of information, applicable to all sciences? [SW63]

  - Can we convert between different notions of information and entropy? [Khi57]

  - Is the mathematical definition of the Kullback-Leibler distance the key to understanding different kinds of information? [KL51]

“In fact, what we mean by information – the elementary unit of information – is a difference which makes a difference, and it is able to make a difference because the neural pathways along which it travels and is continually transformed are themselves provided with energy.” – Gregory Bateson, 1972

- Is it possible to define an absolute (non-relative, total) notion of information? [GML96]

  - Can we talk about the total information content of the universe? [Llo02]

- Where does information go when it is erased?

  - Is erasure of information possible at all? [Hit01]

- Does entropy emerge from irreversibility (in general)?

- Does the concept of asymmetry (group theory) significantly clarify the concept of information?

- What happens to information during computation?
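As a concrete handle on the Kullback-Leibler question above, here is a minimal sketch (not taken from the workshop) of the standard definitions for finite probability distributions. The function names and the example distribution are made up for illustration. It checks one well-known link between the two notions: the Shannon entropy of a distribution over n outcomes equals log2(n) minus its Kullback-Leibler divergence from the uniform distribution.

```python
import math


def shannon_entropy(p):
    """Shannon entropy H(p) in bits; outcomes with zero probability contribute nothing."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def kl_divergence(p, q):
    """Kullback-Leibler divergence D(p || q) in bits.

    Assumes q[i] > 0 wherever p[i] > 0 (otherwise the divergence is infinite).
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical example distribution over three outcomes.
p = [0.5, 0.25, 0.25]
uniform = [1 / len(p)] * len(p)

entropy = shannon_entropy(p)
divergence = kl_divergence(p, uniform)

# Identity: H(p) = log2(n) - D(p || uniform)
print(entropy, math.log2(len(p)) - divergence)
```

In this reading, entropy measures how far a distribution is from the maximally uninformative one, which is one sense in which the Kullback-Leibler divergence might serve as a common currency between different notions of information.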

References

- [CT91] T.M. Cover and J.A. Thomas, Elements of information theory, New York, 1991.

- [GML96] Murray Gell-Mann and Seth Lloyd, Information measures, effective complexity, and total information, Complexity 2 (1996), no. 1, 44–52.

- [Hit01] Hitchcock, Is there a conservation of information law for the universe, 2001.

- [Khi57] A.I. Khinchin, Mathematical foundations of information theory, Dover Publications, 1957.

- [KL51] S. Kullback and R.A. Leibler, On information and sufficiency, The Annals of Mathematical Statistics 22 (1951), no. 1, 79–86.

- [Llo02] Seth Lloyd, Computational capacity of the universe, Physical Review Letters 88 (2002).

- [SW63] C.E. Shannon and W. Weaver, The mathematical theory of communication, 1963.

UPDATE 2010-10-13: added links to the references